
AI Is Now a Weapon

Feb 19, 2026

Is Your Business Protected?

Google's Threat Intelligence Group just released a quarterly report that every technology and security leader needs to read. The headline finding: government-backed threat actors now use large language models as working tools in their attacks. They use them to generate convincing phishing lures, accelerate technical research, and identify targets and attack paths inside organizations far faster than manual effort allows.

This isn't a future risk. It's documented, happening now, and targeting organizations just like yours.

"For government-backed threat actors, large language models have become essential tools for technical research, targeting, and the rapid generation of nuanced phishing lures." — Google Threat Intelligence Report, Late 2025

What this means in plain English: attackers now have an AI co-pilot that helps them move faster, craft more believable attacks, and find gaps in your defenses — including gaps in how your own AI systems interact with your data.

The threats documented in the report include: LLM-generated phishing emails that are virtually indistinguishable from legitimate internal communications; automated vulnerability research that maps your APIs, AI agents, and data pipelines for exploitation; AI-assisted data exfiltration that bypasses traditional DLP tools by moving through legitimate channels; and abuse of your own AI agents to relay sensitive data or escalate privileges across systems.

01 — The Root Cause: Why Your Current Controls Are Missing the Mark

Most organizations handle AI security one of two ways: they either rely on the guardrails baked into the LLM provider they use, or they cobble together a multi-tool stack — SIEM here, API gateway there, a DLP tool somewhere else — and hope the pieces talk to each other.

Neither approach is working.

The core problem: your AI systems, legacy applications, APIs, data stores, users, and AI agents don't share a single enforcement layer. Every interaction between them is a potential trust gap — and adversaries now have AI tools to find and exploit those gaps at machine speed.

LLM providers secure their models. They don't govern how your AI interacts with your CRM, your internal APIs, your databases, or your other AI agents. That's your responsibility — and right now, most businesses have no unified place to define, enforce, and audit those rules.

Building it yourself means months of engineering effort, heavy security research, code changes across dozens of systems, and ongoing maintenance every time your stack evolves. And by the time you ship, the threat landscape has already shifted.

02 — The Solution: One External Authorization Layer. Every Interaction Governed.

Control Core is an advanced external authorization platform. It sits between every component of your digital technology stack — your AI models, AI agents, legacy systems, APIs, data stores, and users — and enforces policy-based access controls on every single interaction.

No code changes. No rearchitecting. No multi-vendor integration sprawl.

When reports like Google's threat intelligence brief emerge, they don't just document what's happening — they map exactly what kinds of controls organizations need to prevent those attacks. Control Core translates those threat patterns directly into enforceable policies, available as out-of-the-box controls you can enable in minutes.

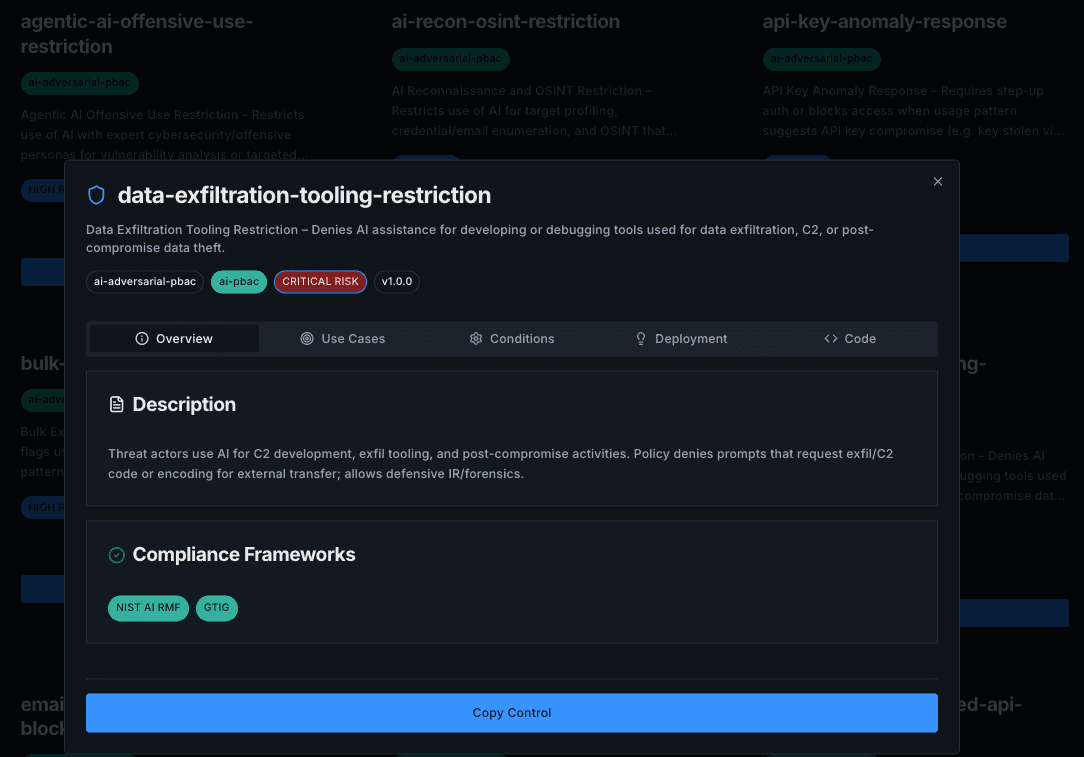

A Real Example: Data Exfiltration Tool Restriction

One of the most critical risks the Google report highlights is AI-assisted data exfiltration — where threat actors use AI agents or compromised integrations to move sensitive data out of your organization through legitimate-looking channels. This is especially dangerous because traditional DLP tools aren't built to watch AI-to-API or agent-to-agent traffic.

Here's what addressing this threat looks like on each path:

The traditional path: your security team reads the threat report. They convene a working group. They map which tools, APIs, and agent integrations could be abused. They write security requirements. Engineering estimates the work — typically 6 to 16 weeks of development across multiple systems, code reviews, testing, and deployment — all before a single rule is enforced. And every time a new tool is added to your stack, the process begins again.

The Control Core path: you open the Controls library. You find Data Exfiltration Tool Restriction. You flip the toggle. Done. The policy is enforced across every relevant interaction in your stack — immediately, with zero code changes, and with a full audit trail from day one.

What That Looks Like in the Platform

📸 [Screenshot: Control Core Controls Library — AI Security tab]

Control: Data Exfiltration Tool Restriction — Enabled

Prevents AI agents and LLM integrations from invoking tools, APIs, or data connectors that could be used to exfiltrate sensitive data outside approved destinations.

Scope: All AI agents, LLM tool calls, API integrations

Threat tags: Exfiltration Risk · AI Agent · API · LLM · Data

Audit: Full interaction log

Setup time: < 2 minutes

Status: ● Active — enforcement live across stack

This is one control, enabled in under two minutes, covering a threat vector that would have taken an engineering team months to build, test, and deploy from scratch. And it's one of many out-of-the-box controls in the Control Core library — each one directly mapped to real-world attack patterns like those documented in Google's report.
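To make the idea behind a control like this concrete, here is a minimal sketch of a destination allowlist check for agent tool calls. It is an illustration only, not Control Core's implementation or API, which this article does not describe. The names `ToolCall`, `is_allowed`, and `APPROVED_DESTINATIONS`, along with the example hostnames, are all assumptions made for the example.

```python
from dataclasses import dataclass
from urllib.parse import urlparse

# Hypothetical allowlist of approved destination hosts (assumption for illustration).
APPROVED_DESTINATIONS = frozenset({
    "crm.internal.example.com",
    "reports.internal.example.com",
})


@dataclass(frozen=True)
class ToolCall:
    agent_id: str     # which agent is making the call
    tool_name: str    # e.g. "http_post" or "send_file"
    destination: str  # URL the tool would send data to


def is_allowed(call: ToolCall, approved: frozenset = APPROVED_DESTINATIONS) -> bool:
    """Allow a tool call only if its destination host is explicitly approved."""
    host = urlparse(call.destination).hostname  # None when no host can be parsed
    # Default deny: unparseable or unlisted destinations are rejected.
    return host is not None and host in approved
```

The check matches the exact hostname rather than a prefix, so a lookalike such as `crm.internal.example.com.evil.net` is rejected along with any destination that is simply not on the list.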

03 — How Control Core Works

Deploy once, enforce everywhere. Control Core integrates as your external authorization layer — one connection point that intercepts and evaluates every interaction across your AI systems, APIs, agents, applications, and users without touching any existing code.

Define guardrails as policies, not code. Use the pre-built controls library, which is informed by real threat intelligence, or write your own policies as plain, no-code configuration. Either way, it takes minutes, not months.
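As a rough sketch of what "policies, not code" can mean in general, the rules below are plain data and a small generic evaluator applies them. The rule format, the `POLICIES` list, and the `evaluate` function are hypothetical and are not Control Core's policy format. The sketch assumes first-match-wins ordering with a default of deny.

```python
from fnmatch import fnmatchcase

# Hypothetical rules expressed as data. The first matching rule wins.
POLICIES = [
    {"subject": "agent:*", "action": "read", "resource": "crm/contacts/*", "effect": "allow"},
    {"subject": "agent:*", "action": "export", "resource": "*", "effect": "deny"},
    {"subject": "user:analyst-*", "action": "export", "resource": "reports/*", "effect": "allow"},
]


def evaluate(subject: str, action: str, resource: str, policies=POLICIES) -> str:
    """Return "allow" or "deny" for one interaction; anything unmatched is denied."""
    for rule in policies:
        if (fnmatchcase(subject, rule["subject"])
                and action == rule["action"]
                and fnmatchcase(resource, rule["resource"])):
            return rule["effect"]
    return "deny"
```

Changing what is permitted then means editing the rule list, not the applications that are being governed.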

Enforce on every interaction, observe everything. Control Core enforces your policies at runtime — every AI call, every API request, every agent action, every user interaction — and generates a complete audit trail for compliance, incident response, and regulatory reporting.
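The pairing of enforcement and observation can be sketched as a single enforcement point that records every decision it makes, allowed or denied. This is a simplified illustration under stated assumptions: `enforce`, `AUDIT_LOG`, and the `read_only` policy are hypothetical names, and the in-memory list stands in for whatever durable audit store a real platform would use.

```python
import json
import time
from typing import Callable

AUDIT_LOG: list[str] = []  # stand-in for an append-only audit store (assumption)


def enforce(decide: Callable[[str, str, str], bool],
            subject: str, action: str, resource: str) -> bool:
    """Evaluate one interaction and record the decision, whether allowed or denied."""
    allowed = decide(subject, action, resource)
    AUDIT_LOG.append(json.dumps({
        "ts": time.time(),
        "subject": subject,
        "action": action,
        "resource": resource,
        "decision": "allow" if allowed else "deny",
    }))
    return allowed


def read_only(subject: str, action: str, resource: str) -> bool:
    # Hypothetical policy for illustration: only reads are permitted.
    return action == "read"
```

Because the log entry is written inside the same function that makes the decision, denied attempts are captured as reliably as permitted ones, which is what incident responders usually want to see first.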

Stay current as threats evolve. When new threat intelligence reports land, such as Google's late-2025 quarterly report, Control Core's controls library is updated to reflect them. You don't re-engineer anything. You just enable what you need.

04 — The Takeaway

Threat intelligence is only valuable if you can act on it fast.

Google's report is a gift: it tells you exactly what adversaries are doing and what you need to stop. The question isn't whether to implement stronger AI guardrails. The question is whether you're going to spend months building them from scratch or minutes enabling them.

Your business doesn't need another point solution or another LLM provider promise. It needs one unified enforcement layer that governs every interaction across your digital technology stack — AI, agents, APIs, data, users, and legacy systems — all secured by policies you define, without modifying a single line of code.

The threat actors have their AI co-pilot. Now it's time you had your authorization layer.

Ready to see it in action?

Explore the Controls library, book a live demo, or talk to our team about how fast you can enforce your first AI guardrail.

© Control Core · controlcore.io · Advanced Authorization Platform for AI, APIs, Agents & Beyond